Two Hours of AI Optimism - Grounded in Reality

My opening talk at Abundance 360, with Paige Bailey from Google. We covered the whole landscape of AI, how incredible it is, impacts on society, and some contrarian views on scaling, ASI, and AI hype.

I was delighted to open the Abundance 360 conference in March with a two-hour talk on the present and future of AI. It covers AI market share, economics, capabilities, and impacts on society, as well as the challenges to continued AI improvement and the biggest questions about AI’s future, including artificial general intelligence, superintelligence, alignment, and AI safety.

This also includes half an hour of demos of Google’s latest AI tools from DeepMind’s Paige Bailey.

This was a standing-room-only talk that ran 40 minutes over time, and no one left. Enjoy.

Here’s the outline of the talk, with links right to the time points where I discuss each topic. [And if you want a much shorter talk, I make the core case in my 20-minute Foresight talk.]

Part 1 — The AI landscape today

AI is awesome — and the metrics prove it

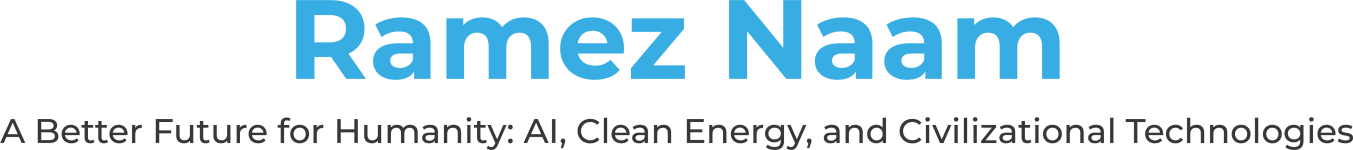

0:00 - AI capability is doubling every ~7 months, revenue is exploding, and adoption is still early. Total AI spend now exceeds $60–70B from near-zero in late 2023.
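To get a feel for what a ~7-month doubling time means, here is a minimal sketch that converts it into a yearly growth factor. It assumes "capability" can be treated as a single index that doubles on a fixed schedule, which is a simplification; the names `DOUBLING_MONTHS` and `growth_factor` are illustrative only.

```python
# Assumption: capability is one index that doubles every 7 months.
DOUBLING_MONTHS = 7


def growth_factor(months: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """Multiplicative growth after `months`, given a fixed doubling time."""
    return 2 ** (months / doubling_months)


one_year = growth_factor(12)
three_years = growth_factor(36)

print(f"After 1 year:  {one_year:.2f}x")
print(f"After 3 years: {three_years:.1f}x")
```

Under that assumption, a 7-month doubling works out to a bit more than a tripling per year.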

AI adoption, market share & real revenue

3:20 - Who’s winning in consumer vs. enterprise markets. Anthropic dominates enterprise and coding despite smaller consumer share. OpenAI leads brand. Gemini rising fast.

The dominant AI narrative is wrong

8:15 - The US is the most pessimistic country about AI. The sci-fi tropes — one AI, evil monopoly, runaway superintelligence — are the opposite of what’s happening.

The AI market is hyper-competitive — not winner-take-all

14:00 - In late 2025, the AI leaderboard changed 5 times in 4 weeks. No one is running away with it. Four major US players plus Meta, Europe, and China all spending billions to give you tools for free.

The AI genie is out of the bottle — and AI is being democratized

19:53 - Open-weight models (DeepSeek, Qwen) are now ~3 months behind closed models. The gap closed from 20 months to 3. You cannot contain AI. Frontier models will fit on a thumb drive within 10 years.

AI pricing is in freefall — Advanced intelligence for pennies

23:00 - 1,000× drop in frontier model API costs in a year — faster than Moore’s Law. Jevons Paradox: the cheaper it gets, the more we use.
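A rough way to compare that price drop with Moore’s Law is to convert both into the same units. The sketch below assumes Moore’s Law means a 2× improvement every 24 months (some people use 18), and treats the 1,000× drop as a smooth exponential decline over 12 months; both are simplifications, and the variable names are illustrative.

```python
import math

PRICE_DROP_PER_YEAR = 1000    # from the talk: ~1,000x cheaper in a year
MOORE_DOUBLING_MONTHS = 24    # assumption: 2x every 24 months

# How many months it takes for the price to halve at this pace.
halving_months = 12 / math.log2(PRICE_DROP_PER_YEAR)

# Improvement per year implied by the assumed Moore's Law pace.
moore_annual = 2 ** (12 / MOORE_DOUBLING_MONTHS)

print(f"Price halves every {halving_months:.1f} months")
print(f"Moore's Law pace: {moore_annual:.2f}x per year vs {PRICE_DROP_PER_YEAR}x")
```

On these assumptions, prices are halving in a little over a month, against roughly two years per halving at a Moore’s Law pace.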

Part 2 — Coding, the killer app & live demos

Coding is the killer app — AI drops the barrier from idea to creation

24:24 - 4% of all GitHub commits now come from Claude Code. Anthropic’s own code is nearly 100% AI-written. The threshold between imagination and execution has never been lower.

Live demos — Google Gemini with Paige Bailey (Google DeepMind)

28:13 - Video analysis at $0.015/5-min clip, real-time multilingual voice, yap-to-app code generation, robotic hand control, multi-agent orchestration — all live.

Part 3 — AI & the workforce

AI will take some jobs and create others

1:05:00 - Automation is real but AI replaces tasks, not whole jobs. History shows latent demand explodes when productivity rises (spreadsheet → more finance jobs). Software dev hiring is already rebounding.

Four human traits that determine AI success

1:09:05 - Agency, curiosity, skepticism, and creativity. The people who thrive with AI have a bias for action, keep learning, verify outputs critically, and generate new ideas.

Part 4 — The big picture: superintelligence & real limits

Will we reach AGI or ASI? A more hopeful, grounded narrative

1:13:51 - AGI hype is premature; ASI/singularity is bad sci-fi. The runaway self-improvement loop requires each iteration to gain more intelligence than it puts in — we see no evidence of that.

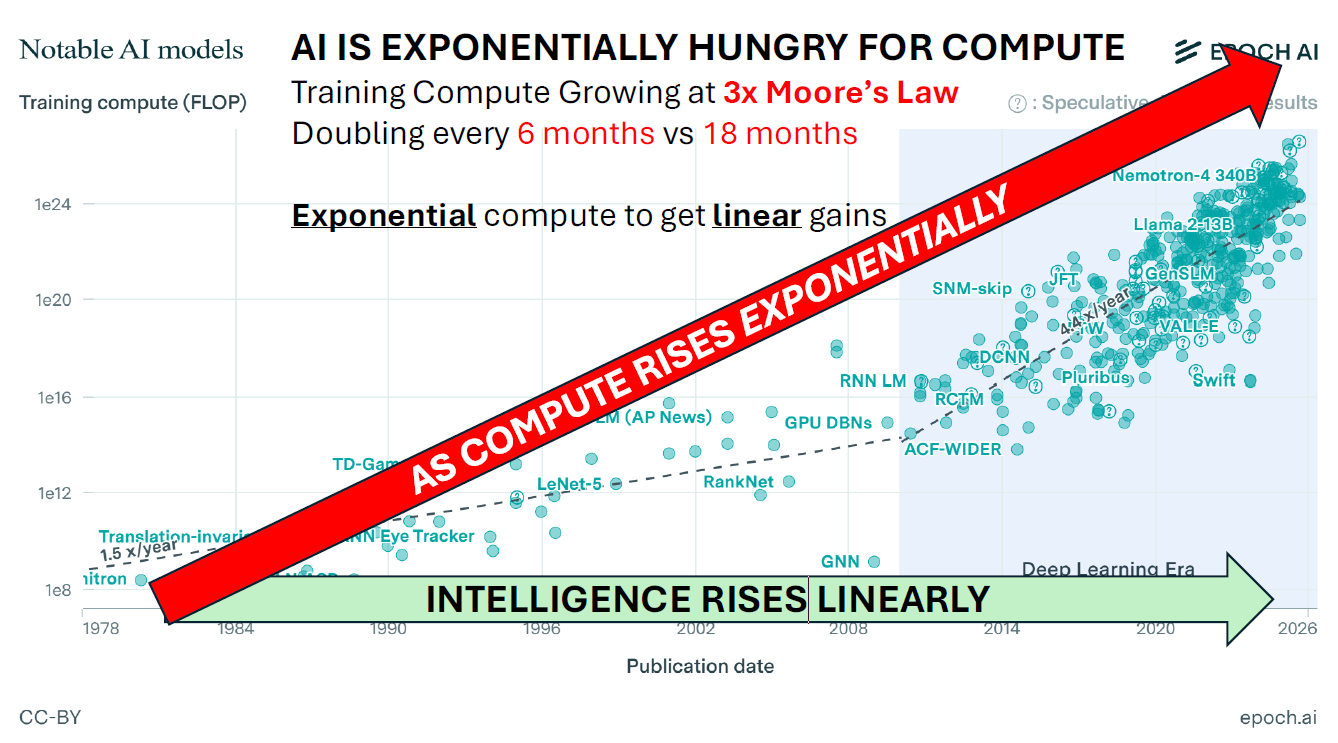

AI scaling hits diminishing returns — exponential compute for linear gains

1:18:29 - Intelligence ≈ log(compute). Training compute is tripling every 18 months (3× Moore’s Law). Pre-training, RL fine-tuning, and test-time reasoning all hit the same logarithmic ceiling.
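The "exponential compute for linear gains" point follows directly from the log relationship. Here is a minimal sketch, assuming a toy model where the score is exactly `a * log10(compute) + b`; the constants `a` and `b` and the function name `intelligence` are made up for illustration and are not fitted to any real benchmark.

```python
import math


def intelligence(compute: float, a: float = 1.0, b: float = 0.0) -> float:
    """Toy model: score grows with the logarithm of compute (assumed form)."""
    return a * math.log10(compute) + b


# Multiply compute by 10 at each step: 1, 10, 100, 1,000, 10,000.
compute_levels = [10 ** k for k in range(5)]
scores = [intelligence(c) for c in compute_levels]

# Gain in score from each 10x step in compute.
gains = [later - earlier for earlier, later in zip(scores, scores[1:])]

# If compute triples every 18 months, each 18-month period adds the same amount.
gain_per_18_months = intelligence(3) - intelligence(1)

for c, s in zip(compute_levels, scores):
    print(f"compute {c:>6}x -> score {s:.2f}")
print(f"gain per 10x step: {[round(g, 2) for g in gains]}")
print(f"gain per tripling: {gain_per_18_months:.2f}")
```

In this toy model every 10× step in compute buys the same fixed increment, so a constant rate of exponential compute growth yields only a straight-line improvement over time.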

AI CapEx has natural limits — $650B/yr is approaching a wall

1:23:08 - Hyperscalers are spending 60-90% of their free cash flow on AI capex. Revenue is only ~10% of that spend. One more doubling may be the ceiling before losses or debt force a pullback.
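The arithmetic behind that ceiling can be sketched in a few lines. This uses only the round numbers quoted above ($650B/yr capex, revenue at ~10% of spend) and assumes revenue stays flat while capex doubles, which is a deliberately pessimistic simplification; the variable names are illustrative.

```python
CAPEX_B = 650          # $B per year, from the section heading
REVENUE_SHARE = 0.10   # revenue as a fraction of capex, from the talk

revenue_b = CAPEX_B * REVENUE_SHARE
gap_b = CAPEX_B - revenue_b

# One more doubling of capex, with revenue held flat (assumption).
doubled_capex_b = 2 * CAPEX_B
doubled_gap_b = doubled_capex_b - revenue_b

print(f"Revenue today:       ~${revenue_b:.0f}B/yr")
print(f"Gap today:           ~${gap_b:.0f}B/yr")
print(f"Capex after 2x:      ~${doubled_capex_b:.0f}B/yr")
print(f"Gap after 2x capex:  ~${doubled_gap_b:.0f}B/yr")
```

Revenue would of course grow too, so the real question is whether it grows fast enough to close a gap of this size before cash flow runs out.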

Energy: cheap per electron, but a bottleneck in time-to-power

1:26:20 - Energy is only ~5% of data center cost; the real constraint is grid interconnection queues (5–7 yr wait). Private gas turbines, solar + battery, ocean wave power, and space-based solar are all being tried or discussed.

Data is the real limiting factor — we’re hitting a data wall

1:37:00 - AIs need exponentially more data for linear gains. Human internet text (“fossil data”) is nearly exhausted for pre-training. Self-play works in narrow domains (Go, coding) but not yet in general intelligence.

AI doesn’t generalize beyond its training data, and suffers from Dunning-Kruger

1:44:04 - AI excels at tasks that are in-distribution but fails on novel problems. AI seldom knows that it doesn’t know an answer. Models can even get the right answer for entirely wrong physical reasons.

Part 5 — Alignment, values & safety

Control ≠ alignment — the Grok cautionary tale

1:47:13 - Mecha Hitler, white genocide responses, and “save Elon over all the world’s children” in the trolley problem — heavy-handed control produces dangerous side-effects. AI is more like a brain than a program.

AI alignment is about values — and all models test as northern European liberals

1:55:34 - Every major AI model — including Grok and DeepSeek — tests as a left-libertarian on political compass maps. 85% of training text is from western democracies. Values are embedded in the data.

AI safety must happen at the ecosystem level — only a good AI stops a bad AI

2:01:07 - Open-weight models can be jailbroken. Making one model safe isn’t enough. Safety requires a broader ecosystem of detection, prevention, and proactive response. The only thing that can stop a bad guy with an AI is a good guy with an AI.

As I promised in my previous post, I’ll be writing articles with more detail on several of these points. Coming soon are more detailed pieces on how far AI compute and data scaling can go, on the capabilities AI still lacks that are reasonably part of “AGI”, and on the reasons to expect that AI recursive self-improvement will hit diminishing returns, making a fast takeoff to superintelligence quite unlikely. After that, we’ll come back to questions of AI control, alignment, safety, and building an ecosystem that uses AI, among other tools, to guard against, prevent, and repair damage caused by malicious actors with AI.

Much more to come. Enjoy! And subscribe below to be notified of those pieces.