Common AI Narratives are Wrong (Video and Part 1)

AI will be democratized, commoditized, and not monopolized. AGI isn't here yet, and no fast recursive improvement to ASI seems likely. Algorithmic breakthroughs, not scaling, are likely key.

Dear readers: I’m firing up this substack to publish thoughts on the state of AI, clean energy, climate, and how to steer technology to maximize the well-being of humanity. You should expect to see much more writing on these topics from me in the near future. Welcome!

I gave a fun and fast-paced talk on how I view AI progress at the Foresight Vision Weekend Puerto Rico recently. I see myself as an AI optimist, yet I am skeptical of some of the most radical expectations, both utopian and dystopian, as I explain here. My opinions here are strongly expressed, yet are all subject to change in the face of evidence and data.

First, here’s the video. Below I’ll outline the key points and where I see my expectations of AI in contrast to many of the narratives I most commonly hear.

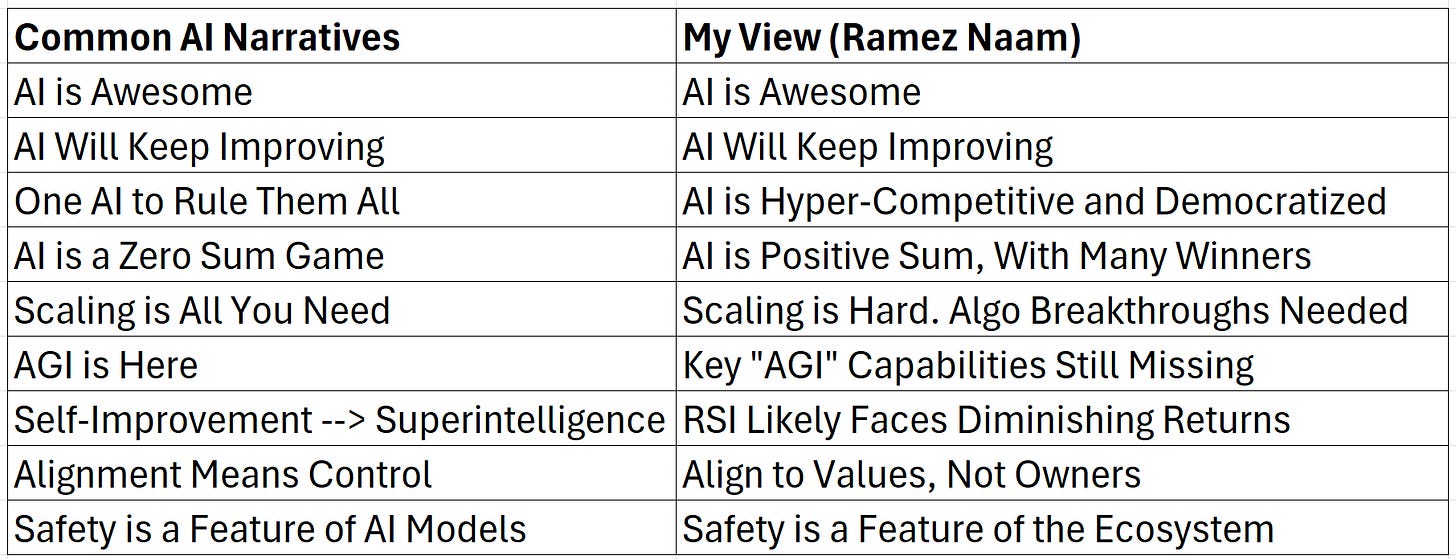

Without further ado, here’s the cheat sheet to my view of the current situation in AI. Again, all of this is subject to change based on evidence.

Today I’ll give a thumbnail sketch of the first four points:

AI is an amazing technology that will bring us benefits.

AI models will keep improving, with no wall in sight (but some challenges).

AI won’t be monopolized. It’ll be hyper-competitive, democratized, and plural.

AI isn’t zero sum, either between customers and AI companies, or even between the US and China.

Over the coming days, I’ll go into depth on the even more contrarian themes:

Scaling is unlikely to get us to super-intelligence. We need algorithmic breakthroughs.

AGI isn’t here today. We’re missing core capabilities that humans have.

Recursive Self Improvement is unlikely to lead to runaway SuperIntelligence.

AI alignment isn’t synonymous with AI model control.

AI safety must go beyond the models and become a feature of the ecosystem.

Today let’s hit the earlier points.

1. AI is Awesome

AI is a fairly general purpose technology - a cognitive prosthesis that, among other things, augments our ability to do all sorts of intellectual work. In its broad capabilities and wide impact on society, it’s most analogous to the internet, mobile phones, computers, books, and the printing press.

Like those older technologies, AI will surely bring a host of problems. Every technology throughout history has. And, like other technologies before it, AI is likely to be harnessed - despite some problems - to be a net positive for humanity.

2. AI Will Keep Improving

AI models are improving rapidly before our eyes. There’s no obvious wall to this improvement. Current AI models do have challenges and limitations. There are ways in which continued progress will become more difficult or at least require substantially greater resources. But we also have incredible innovative capabilities, incredible incentives, and incredible resources being applied to AI. I see a gradient of increasing difficulty in improving AI, which is being met by ever more clever and better-resourced efforts to do exactly that.

Progress will almost certainly continue.

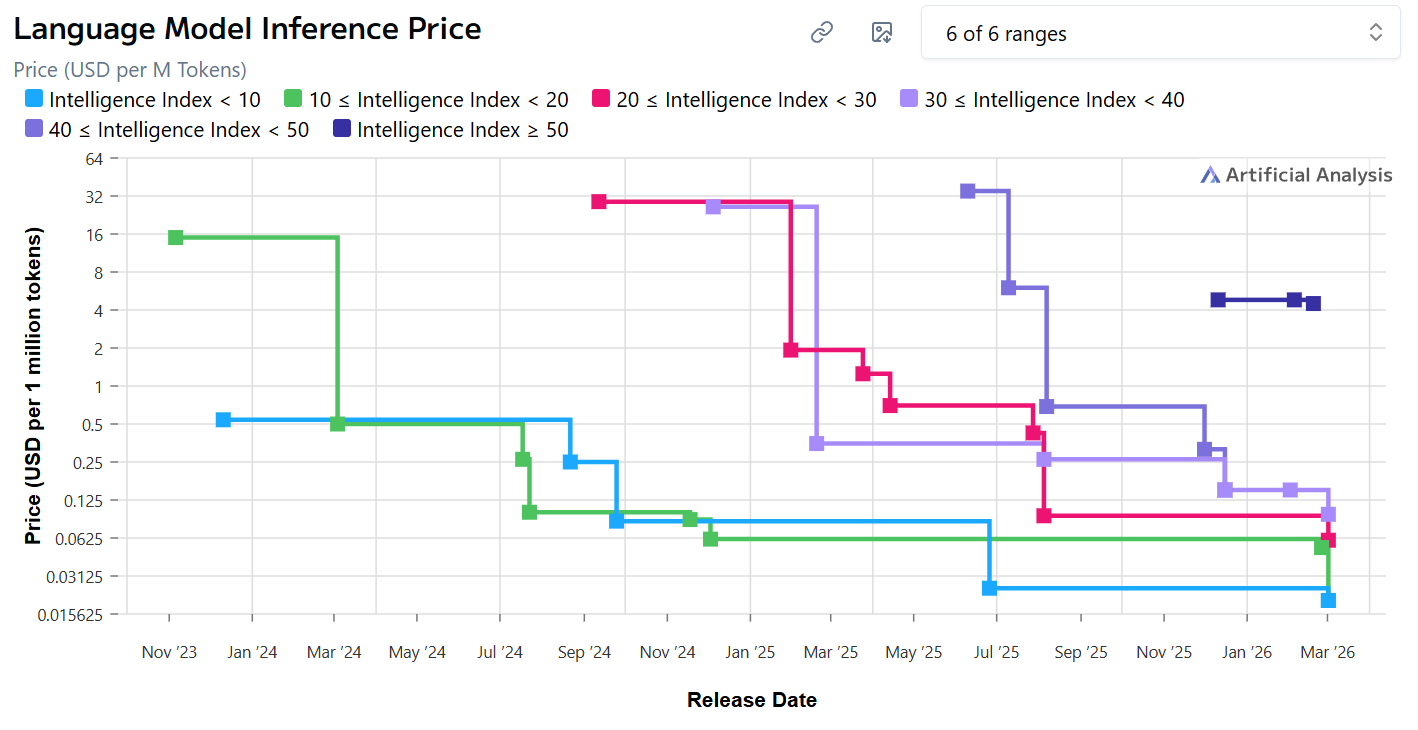

The price of using AI models is also plunging. The price paid for AI inference (using an AI model, not training it) has dropped by as much as 100-fold in less than a year. That is an incredible gift to the users and customers of AI. We have every reason to believe that this trend will continue with each successive wave of new AI models.
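To get a feel for what a 100-fold drop means month to month, here is a rough back-of-the-envelope sketch. It assumes the decline is a steady exponential spread over exactly 12 months, which is my simplification rather than anything measured. The function names are hypothetical, made up for this illustration. Since the drop happened in less than a year, these numbers if anything understate the pace.

```python
import math


def implied_monthly_decline(fold_drop: float, months: float) -> float:
    """Fraction by which the price falls each month.

    Assumes a steady exponential decline (an illustrative
    simplification, not a measured price curve).
    """
    if fold_drop <= 1 or months <= 0:
        raise ValueError("fold_drop must be > 1 and months must be > 0")
    monthly_factor = fold_drop ** (1 / months)  # price divides by this each month
    return 1 - 1 / monthly_factor


def halving_time_months(fold_drop: float, months: float) -> float:
    """Months for the price to fall by half under the same assumption."""
    if fold_drop <= 1 or months <= 0:
        raise ValueError("fold_drop must be > 1 and months must be > 0")
    return months * math.log(2) / math.log(fold_drop)


# Assumed inputs: a 100-fold drop spread evenly over 12 months.
decline = implied_monthly_decline(100, 12)
halving = halving_time_months(100, 12)
print(f"Implied price decline per month: {decline:.1%}")
print(f"Implied price halving time: {halving:.1f} months")
```

Under those assumptions, prices fall by roughly a third every month and halve in a bit under two months.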

3. AI Will Be Democratized, Not Monopolized

In science fiction AI stories, there’s frequently a single super-powerful AI that rules the world, or intends to. AI is portrayed as inherently winner-takes-all. This is convenient from a narrative standpoint. It taps into our anxieties about loss of control over our lives. This also matches, to some extent, a frequent pattern in tech, where natural monopolies such as social networks or operating systems capture a huge share of a winner-takes-all or winner-takes-most market due to network effects.

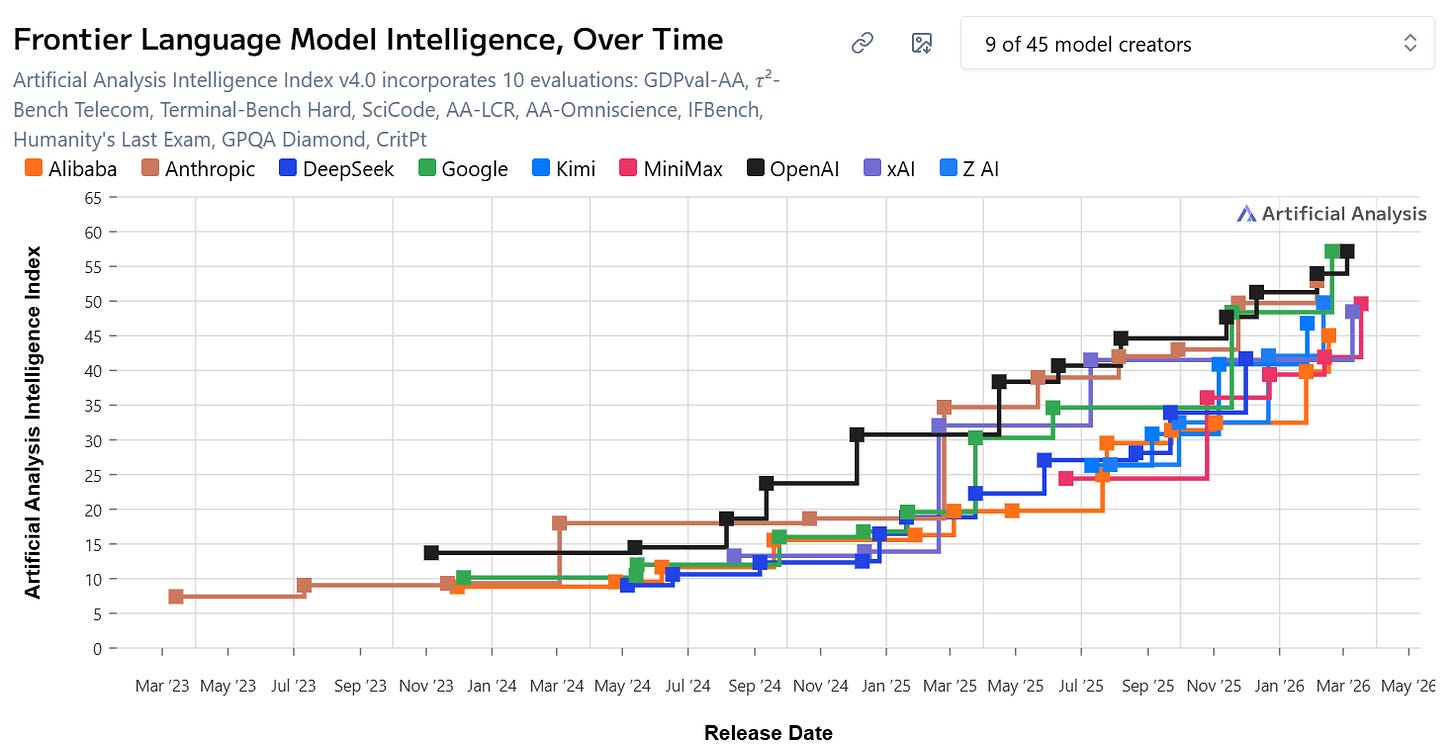

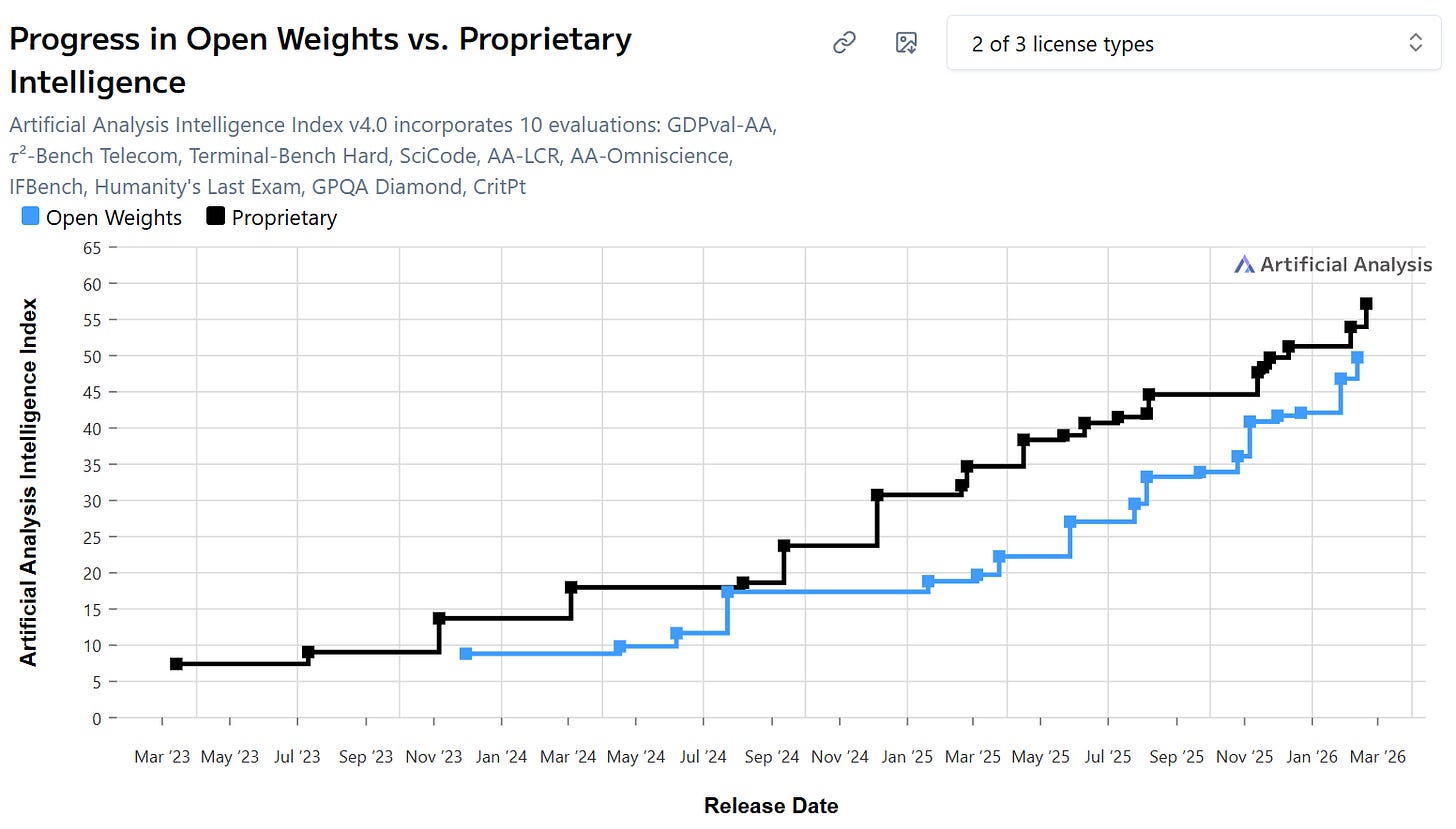

AI doesn’t look winner-takes-all. The leading AI labs are neck-and-neck in quality. Open-weight models are nipping at their heels. There are no obvious network effects or flywheels (Amazon’s term for positive feedback loops) for the labs as of now. [This has important business and investment consequences, which I’ll come to in a future post.]

There’s no obvious sustainable advantage for particular nation-states, either.

Instead, we have a world where:

A. No AI lab keeps a lead for long. In the 12 months preceding this blog post, the title of most capable model on AI benchmarks has changed hands 14 times, among 4 companies. In a 4-week span between mid-November and mid-December 2025, the top of the leaderboard changed 4 times, an average of once a week. Meanwhile, the leading AI companies continue to drop the prices customers pay.

This is perhaps the most hyper-competitive technology market we’ve ever seen. And crucially, no AI company has demonstrated that their lead - in capabilities, market share, or usage - can be translated into faster progress.

B. Open-weight models are nipping at the heels of proprietary models. Open-weight models are models that can be downloaded by anyone, to run on their own compute, and even to be tweaked, modified, or jailbroken. These models, mostly created by Chinese companies, are perhaps three months behind the top proprietary models released by US companies. The best open-weight model as of March 2026 is nearly on par with the best proprietary model as of December 2025.

C. The AI genies are out of the bottle. This in turn means that we must rethink any ideas about controlling or restricting access to AI. Dreams of monopolizing AI and dreams of AI safety achieved through strict control of model capabilities fly in the face of a world where anyone, anywhere, in almost any country, can get access to models better than anyone on Earth had access to just a few months ago.

D. A plural, multi-polar AI world. The world we’re headed for is one of a multitude of AI models, with AI companies spending hundreds of billions of dollars to make models ever better, faster, and cheaper. While the AI labs will surely reap enormous revenues, the fierce competition means that the value will accrue mostly to users of AI. This decentralized world also makes AI control (for good or ill) quite difficult. This has some consequences that we’ll consider below and in future posts.

All said, I’m incredibly happy to see this future coming rather than a future where AI is dominated by any one person, corporation, or nation.

4. AI Is Mostly Positive Sum Between Companies & Customers, and Between Nations

There’s a related perception that AI operates in a zero-sum world. AI labs are sometimes perceived as being extractive rather than generative (see what I did there?). And even more so, the AI competition between companies in the US and China is almost always framed as zero-sum, with the assumption that if one nation wins, the other loses.

This flies in the face of the bulk of what we know about both technology and scientific research.

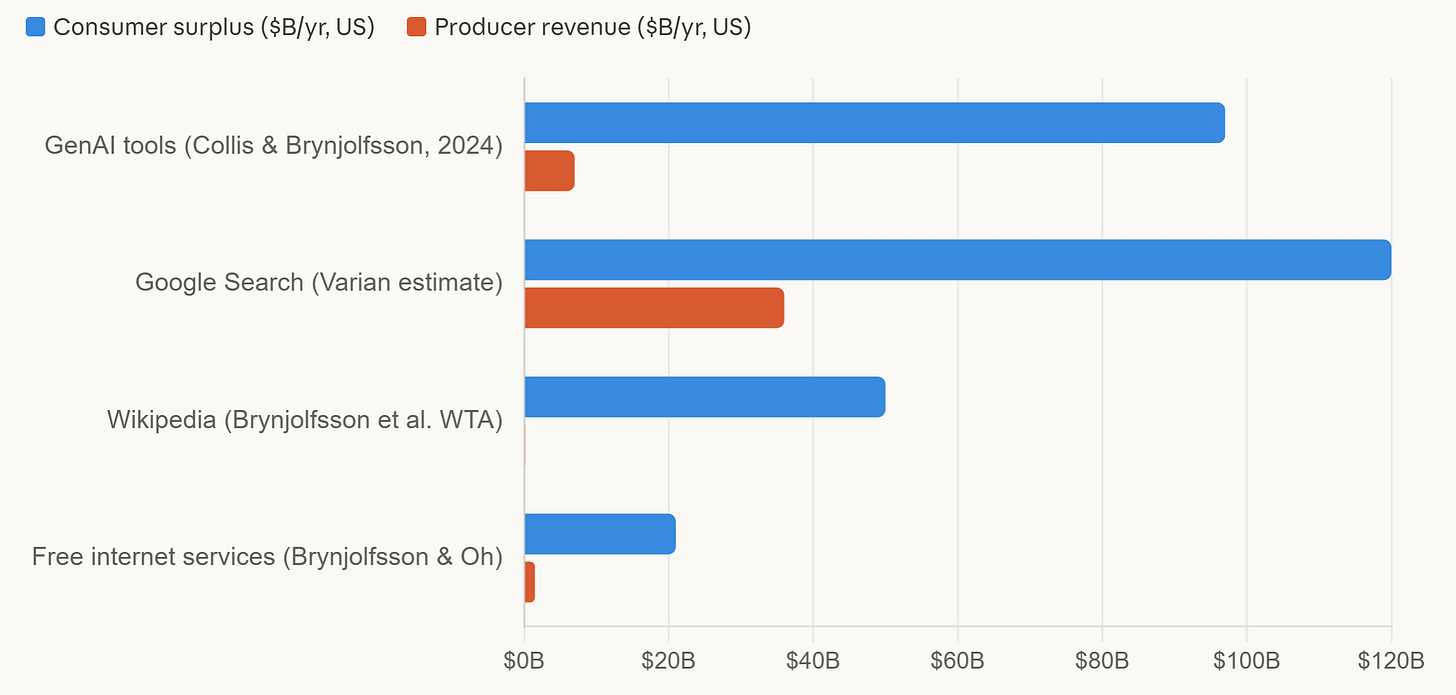

Technology companies can amass huge fortunes, which makes it easy to think of them as profiting at the expense of customers or society. (And of course they do cause some harms.) Yet research consistently finds that the bulk of the value of information technology goes to the users of the technology, and not to the creators or providers of it. For example, Erik Brynjolfsson et al. found that in mid-2025, for every dollar of revenue AI companies were bringing in, they were creating roughly $14 of consumer value. (This is measured by how much you would have to pay a user of AI to give it up.) That’s consistent with past studies of numerous technologies finding that customers get more value than is captured by the companies that create and sell those technologies.

That shouldn’t surprise us: The world has become a better place in large part because of better technology, and it’s clear that individuals are the big winners.

This should make us think twice about the conception that AI companies are rapacious and extractive.

It should also cause us to reconsider the zero-sum framing of AI competition between the US and China. The US / China AI competition is frequently talked about with the implicit or explicit assumption that, if China builds better and better AI technology, the US will suffer.

There’s some reason to be concerned about advancing AI technology in China. AI is a dual-use technology. It can be used as a general purpose consumer technology, like search or the internet. It can be used to develop better drugs or materials or other advances that improve our lives. And it can also be used for surveillance, hacking, propaganda, and on the battlefield. We shouldn’t be naive: China (and other nations) will use AI in intelligence gathering and as a weapon. (Ryan Fedasiuk has some excellent thoughts on what to do about this.)

Most AI use is commercial, though, and much of that will have benefits for consumers worldwide. In China in particular, estimates are that 80-90% of all AI usage is in the private sector. That commercial usage is likely to generate consumer surplus. And some of that consumer surplus will reach the US and the rest of the world. In particular, when AI is used to accelerate innovation - to design better life-saving drugs or new materials or clean energy technologies, for example - more than 90% of the benefits will go to customers, and those customers are global.

As a simple example, China has recently passed the US in early-stage drug development. Pharma is a specific sector where more than 90% of the benefits are reaped by consumers, not producers. Anyone, anywhere in the world, who suffers from a disease that’s treatable by one of these drugs benefits. That includes Americans. And China is a leader in using AI for drug development.

A second positive-sum factor is the effect on AI research itself. Chinese researchers now produce more papers about AI than researchers in the US, UK, and EU combined. US papers remain the most highly cited in AI. But it’s clear that research in China isn’t just advancing AI in China - it’s resulting in publications that have the potential to improve AI worldwide.

National security risks are real. And AI - like almost all technology - is fundamentally positive sum.

That’s it for today. Enjoy the talk. In the coming days we’ll come back to short descriptions of the remaining 5 points:

Scaling isn’t all you need. Scaling is unlikely to get us to super-intelligence. We need algorithmic breakthroughs.

AGI isn’t here today. We’re missing core capabilities that humans have.

ASI isn’t near. Recursive Self Improvement is unlikely to lead to runaway SuperIntelligence.

Alignment != control. AI alignment isn’t synonymous with AI model control.

Safety is at the ecosystem layer. AI safety must go beyond making individual models safe and become a feature of the ecosystem.

Until then, if you found this valuable, please consider sharing it and subscribing.